Summary of Chapter 5

- Regular expressions let you extract text that matches a pattern

- You write the pattern using a special notation (metacharacters)

- You use the pattern to search in the text, producing a “match” or extracting all occurrences

Uses:

- Parse semi-structured files

- Extract information from free text

- More sophisticated find-replace

- …
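As a rough illustration of the first and third uses, here is a minimal sketch. The log lines are invented for the example, and the date format is an assumption, not something taken from the chapter.

```python
import re

# Hypothetical log lines, invented for illustration
log = """2021-03-01 ERROR disk full
2021-03-02 INFO backup done
2021-03-03 ERROR network down"""

# Parse a semi-structured file: pull out (date, message) for every ERROR line
errors = re.findall(r'(\d{4}-\d{2}-\d{2}) ERROR (.*)', log)
print(errors)
# [('2021-03-01', 'disk full'), ('2021-03-03', 'network down')]

# More sophisticated find-replace: rewrite dates from YYYY-MM-DD to DD/MM/YYYY
swapped = re.sub(r'(\d{4})-(\d{2})-(\d{2})', r'\3/\2/\1', log)
print(swapped.splitlines()[0])
# 01/03/2021 ERROR disk full
```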

Strengths:

- One line of code can do a lot!

- Very fast

- Well-implemented in Python (cf. R)

Weaknesses:

- Very difficult to debug

- Can be hard to write the “perfect” regex

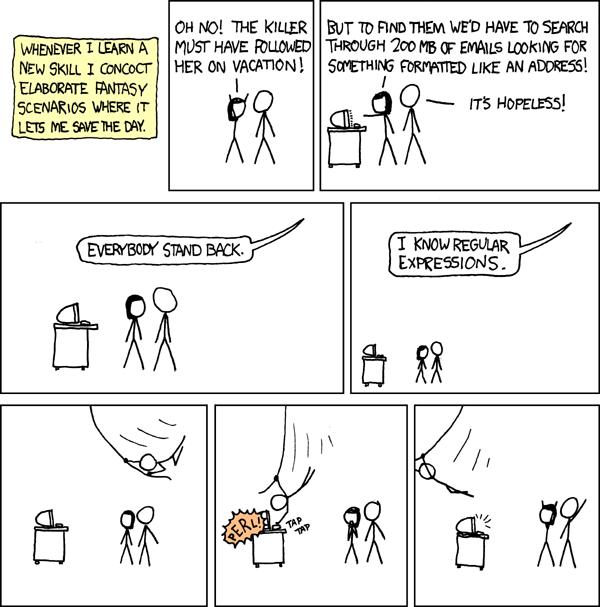

What you want to be doing:

What you don’t want to be doing:

Basics

import re # need to load module

# "literal" match --- match exactly what you wrote

re.findall(r'a', 'ciao') # returns ['a']

re.findall(r'99', '1099099') # returns ['99', '99']

Metacharacters

- \n match a newline

- \t match a tab

- \s match a whitespace character (space, tab, newline)

- \d match a digit (0123456789)

- \w match a “word character” (a-z, A-Z, _, 0-9)

- . match any character except a newline (note: no backslash; to match a literal dot use \.)

- [abc87] match any of the characters between the brackets

- [a-z] match any character between a and z

- [^ATGC] match any character except A, T, G, C

- \b match a word boundary (used to detect full words)

re.findall(r'\w\n\w', 'This is a line\nThis is another line')

# returns ['e\nT']

re.findall(r'\d\.\d.', '12.3456')

# returns ['2.34']
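The character sets and the word boundary are not covered by the two examples above, so here is a small sketch of how they behave. The test strings are made up for illustration.

```python
import re

# [^ATGC] picks out every character that is not a valid DNA base
bad = re.findall(r'[^ATGC]', 'ATGXCCNA')
# ['X', 'N']

# [a-z] matches one lowercase letter; uppercase letters and digits are skipped
lower = re.findall(r'[a-z]', 'aB3c')
# ['a', 'c']

# \b restricts the match to the full word "cat"
whole = re.findall(r'\bcat\b', 'cat concatenate cat.')
# ['cat', 'cat']

# Without \b, the "cat" inside "concatenate" matches as well
loose = re.findall(r'cat', 'cat concatenate cat.')
# ['cat', 'cat', 'cat']
```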

Quantifiers

- ? match 0 or 1 time

- * match 0 or more times

- + match 1 or more times

- {5} match exactly 5 times

- {5,} match at least 5 times

- {5,7} match between 5 and 7 times (inclusive; write it without a space after the comma)

re.findall(r'\d*\.\d{,2}', '123.45678')

# returns ['123.45']

re.findall(r'\d*\.\d*', '12345678')

# returns []
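A few more quantifier cases, as a minimal sketch with invented test strings:

```python
import re

# ? makes the preceding character optional
optional = re.findall(r'colou?r', 'color colour colouur')
# ['color', 'colour']

# + requires at least one digit
digits = re.findall(r'\d+', 'a1b22c333')
# ['1', '22', '333']

# {5,7} accepts runs of 5 to 7 digits; \b keeps longer runs from matching in part
ranged = re.findall(r'\b\d{5,7}\b', '1234 12345 1234567 12345678')
# ['12345', '1234567']
```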

Anchors

- $ match at the end of the line

- ^ match at the beginning of the line
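One detail worth checking when using anchors in Python: by default they refer to the start and end of the whole string, and the re.MULTILINE flag is needed for them to apply to every line. A short sketch, using an invented two-line string:

```python
import re

text = 'first line\nsecond line'

# By default, ^ matches only at the very start of the string
start_default = re.findall(r'^\w+', text)
# ['first']

# With re.MULTILINE, ^ and $ match at the start and end of every line
starts = re.findall(r'^\w+', text, re.MULTILINE)
# ['first', 'second']
ends = re.findall(r'\w+$', text, re.MULTILINE)
# ['line', 'line']
```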

Alternations

- a|b match either a or b
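A minimal sketch of alternation, with made-up strings. Each side of the | can be longer than one character:

```python
import re

# Match either of two whole words
pets = re.findall(r'cat|dog', 'cat, dog, bird')
# ['cat', 'dog']

# More than two alternatives can be chained
days = re.findall(r'Mon|Tues|Wednes', 'Monday Tuesday Wednesday Thursday')
# ['Mon', 'Tues', 'Wednes']
```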

Raw strings

The r in front of the string means “do not interpret special characters”. Note the difference between:

print('my long \n line')

which prints

my long

line

and

print(r'my long \n line')

which prints

my long \n line
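This matters for regular expressions because several metacharacters, such as \b, are also Python string escapes. A small sketch of what can go wrong without the r prefix:

```python
import re

# Without r, Python turns '\b' into a single backspace character
plain_len = len('\b')     # 1
raw_len = len(r'\b')      # 2 (a backslash followed by the letter b)

# The non-raw pattern searches for backspace + "cat" + backspace
no_raw = re.findall('\bcat\b', 'a cat')
# []

# The raw pattern reaches re intact, so \b means "word boundary"
with_raw = re.findall(r'\bcat\b', 'a cat')
# ['cat']
```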

Groups

Groups are defined by round brackets. They can be used to:

- Organize your match (i.e., match separate pieces)

re.findall(r'(\d+\.?\d*)\s[\+\-]\s(\d+\.?\d*)i', '50.3 + 25i')

[('50.3', '25')]

- Capture text flanked by known regions

re.findall(r'Error: ([\w\s\_]+)', 'Error: page not found --- terminate')

['page not found ']

- Build complicated parts that would be difficult to write otherwise (e.g., complex alternations)
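The last use has no example above, so here is a minimal sketch with an invented string. The (?:...) form, which groups without capturing, is standard Python syntax but is an addition to what this summary covers.

```python
import re

text = '3 apples, 1 pear, 12 plums'

# The brackets limit the alternation to the fruit name,
# and each group becomes one element of the tuple
fruit = re.findall(r'(\d+) (apples?|pears?)', text)
# [('3', 'apples'), ('1', 'pear')]

# (?:...) groups without capturing, so findall returns the whole match
whole = re.findall(r'\d+ (?:apples?|pears?)', text)
# ['3 apples', '1 pear']
```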

Functions in re

- re.findall returns a list of matches (groups are organized in a tuple)

- re.search finds the first match; returns a match object (or None if there is no match)

- re.compile stores the pattern, which speeds up repeated use

- re.split splits a string according to regex

- re.sub substitution guided by regex
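A quick sketch of the functions other than re.findall, applied to one invented string:

```python
import re

text = 'Alice: 12 papers; Bob: 7 papers'

# re.search returns a match object for the first match (or None)
m = re.search(r'(\w+): (\d+)', text)
first = (m.group(1), m.group(2))
# ('Alice', '12')

# re.compile stores the pattern so that it can be reused
number = re.compile(r'\d+')
counts = number.findall(text)
# ['12', '7']

# re.split cuts the string wherever the pattern matches
pieces = re.split(r';\s*', text)
# ['Alice: 12 papers', 'Bob: 7 papers']

# re.sub replaces every match
masked = re.sub(r'\d+', 'N', text)
# 'Alice: N papers; Bob: N papers'
```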

Greedy vs non-greedy

- ? * + are all greedy: they match as much as possible

- To make them “reluctant”, add a ? after the quantifier (for example *?)

re.match(r'.*\s', 'a sentence with multiple words').group()

'a sentence with multiple '

re.match(r'.*?\s', 'a sentence with multiple words').group()

'a '
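The difference is often easiest to see with re.findall on text containing repeated delimiters. A minimal sketch, using a made-up HTML fragment:

```python
import re

html = '<b>bold</b> and <i>italic</i>'

# Greedy: .* runs to the last > in the string
greedy = re.findall(r'<.*>', html)
# ['<b>bold</b> and <i>italic</i>']

# Reluctant: .*? stops at the first > it can
reluctant = re.findall(r'<.*?>', html)
# ['<b>', '</b>', '<i>', '</i>']
```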

Warmup exercise

We are going to exploit a small bug (or “feature”) of Scopus.

- Go to Scopus.com

- Click on “Affiliations”

- Search for “University of Chicago”

- Click on “University of Chicago”

- Click on the number of Authors in the top-right panel

- The page should show the twenty most prolific authors with an affiliation to U of C

- Go to the URL bar in your browser, and modify the address: instead of resultsPerPage=20& use resultsPerPage=2000&

- Now you have information on 2000 authors!

- Save the page in your sandbox as scopus.html

- You are now the proud owner of a list of the 2000 most prolific authors at the University

- For each author, use regular expressions to extract:

  - Name

  - Number of papers

  - The first in the list of disciplines

- Which discipline is most represented? Take the list of authors you’ve just produced, and tally the number of authors by discipline.

- What is the average number of papers for these authors?
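One possible shape for the extract, tally and average steps is sketched here. The page text and the pattern are invented stand-ins: the real scopus.html markup is different, so the pattern would have to be adapted after inspecting the saved file.

```python
import re
from collections import Counter

# Invented stand-in for the saved page (NOT the real Scopus markup)
page = (
    '<li>Author A | 120 | Medicine; Biology</li>\n'
    '<li>Author B | 80 | Physics; Chemistry</li>\n'
    '<li>Author C | 40 | Medicine</li>\n'
)

# One group per field: name, number of papers, first discipline
pattern = re.compile(r'<li>(.+?) \| (\d+) \| ([^;<]+)')
records = pattern.findall(page)

# Tally the authors by discipline
tally = Counter(discipline for _, _, discipline in records)
print(tally.most_common(1))
# [('Medicine', 2)]

# Average number of papers (the counts come back as strings)
average = sum(int(papers) for _, papers, _ in records) / len(records)
print(average)
# 80.0
```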

Here’s the solution

Note: We have parsed HTML using regex, which is completely unnecessary; there are specialized tools for this task. We’re going to look at one of them in week 6.